- Three key limitations (variable stain colour, overlapping deposits, and non-circular stains) can distort area, density, and droplet size estimates.

- Granulometry, dilation and erosion, and distance transforms help separate overlaps, identify nuclei, and estimate size distributions and deposit density.

- Percent area covered is the most reliable metric; resolution has minor effect, while measurement variability across software is about +/-4%.

- Deposit counts and droplet size estimates are sensitive to resolution and overlap; droplet size inference is highly questionable without controlled conditions.

- Standardize materials and protocols: use the same WSP brand and batch, identical software and analysis workflow, and report methods transparently.

This text was generated by OpenAI GPT 5 Mini

This is the final part of our three-part article discussing methods for digitizing and processing water sensitive paper. You can read part one here and part two here.

Morphological operations

We can now move on to the larger shapes, or “morphology”, of the objects in our binary image. Our goal is to quantify deposits by interpreting these shapes. Once again, these operations are powerful processing tools, but we must acknowledge three overriding limitations:

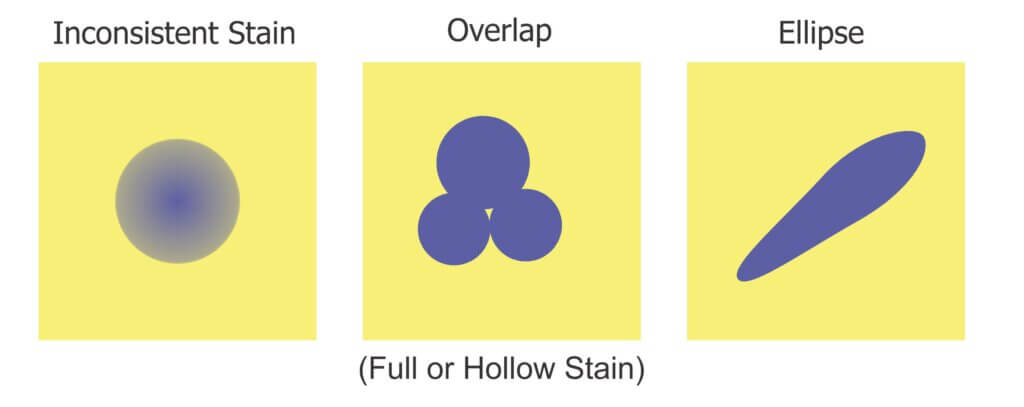

1. Inconsistent stains

Sometimes deposits do not create a consistent blue colour – they can get lighter or take on a greenish-yellow hue towards the perimeter of the stain. During thresholding, the outer edge can be accidentally eroded, leaving behind an object with a jagged edge. This may lead us to underestimate the percent area actually covered. In the case of tiny stains, it might eliminate them entirely and lead us to underestimate deposit density.

2. Overlaps

It can be difficult to determine if an object represents a stain from a single droplet or is the result of multiple, overlapping deposits. This becomes significant when the surface of the WSP exceeds ~20% total coverage. The resulting objects may or may not have hollow centres where droplets do not overlap entirely. Misidentifying overlaps leads us to falsely conclude that an object is the result of a single, coarser droplet rather than multiple finer droplets.

3. Ellipses

Non-circular stains are formed when droplets scuff along the surface. Two droplets with the same volume encountering a paper at different angles can create stains with significantly different areas. We may wrongly conclude that the droplets that created them were coarser than they truly were. One approach is to use Feret’s Diameter (aka Caliper Diameter) by measuring the widest spans on the X and Y axes and taking the average. Another approach is to interpret the ellipse as a series of circular stains. Or we can decide to only acknowledge these objects when calculating percent area covered, but omit them when calculating deposit density or predicting original droplet size. Each strategy is a compromise, so it is important to be consistent and transparent when reporting results.
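As a rough illustration of the first approach, the sketch below averages the widest X and Y spans of a single object, exactly as described above. The function name `average_span`, the pixel-coordinate input and the 10 µm pixel size are assumptions made for this example and are not taken from any particular software package.

```python
def average_span(pixels, um_per_pixel=1.0):
    """Average the widest X and Y spans of one object, in micrometres."""
    pixels = list(pixels)                 # (row, col) coordinates of the object
    rows = [r for r, _ in pixels]
    cols = [c for _, c in pixels]
    span_y = max(rows) - min(rows) + 1    # widest span along Y, in pixels
    span_x = max(cols) - min(cols) + 1    # widest span along X, in pixels
    return um_per_pixel * (span_x + span_y) / 2


# Hypothetical scuffed stain: 3 pixels tall and 9 pixels wide.
streak = [(r, c) for r in range(3) for c in range(9)]
diameter_um = average_span(streak, um_per_pixel=10.0)  # assumed 10 um per pixel
print(diameter_um)
```

A 30 µm by 90 µm streak is reported here as a single 60 µm diameter, which shows why this strategy is a compromise rather than a correction.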

We’ll explore two morphological operations that can help us separate fact from fiction: Granulometry and Dilation-and-Erosion. We’re introducing these operations as part of the processing and detection step, but they may also overlap with the measurement step in our three-step process.

Granulometry

We can estimate the range of object sizes and get a sense of how they are distributed on the paper by filtering or “sieving” the image. Imagine pouring a mixture of sand and rocks through a series of ever-finer sieves. Doing so allows you to separate particles based on size exclusion. A granulometry function compares each object to a series of standardized objects with decreasing diameters. This isolates objects of a similar size and bins them in that size range. This is a powerful operation, but accuracy is lost when stains overlap to form larger objects. In this case, we move on to Dilation and Erosion.
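The sieving idea can be sketched with morphological openings of increasing size. This is a minimal pure-Python illustration that assumes square sieves and a binary image stored as a set of (row, column) foreground pixels. The helper names (`offsets`, `granulometry`, `block`) are hypothetical, and dedicated image analysis packages typically use disc-shaped sieves and much faster array code.

```python
from itertools import product


def offsets(k):
    """Offsets covering a (2k+1) x (2k+1) square sieve."""
    return list(product(range(-k, k + 1), repeat=2))


def erode(pixels, k):
    """Keep only pixels whose whole square neighbourhood is foreground."""
    square = offsets(k)
    return {(r, c) for r, c in pixels
            if all((r + dr, c + dc) in pixels for dr, dc in square)}


def dilate(pixels, k):
    """Grow every foreground pixel into a full square."""
    square = offsets(k)
    return {(r + dr, c + dc) for r, c in pixels for dr, dc in square}


def granulometry(pixels, max_k):
    """Map each sieve width (in pixels) to the foreground area it removes."""
    removed, previous = {}, len(pixels)
    for k in range(1, max_k + 1):
        remaining = len(dilate(erode(pixels, k), k))   # opening with sieve k
        removed[2 * k + 1] = previous - remaining
        previous = remaining
    return removed


def block(top, left, side):
    """Hypothetical square stain, `side` pixels wide."""
    return {(top + r, left + c) for r in range(side) for c in range(side)}


image = block(0, 0, 3) | block(10, 10, 7)   # one small and one large stain
spectrum = granulometry(image, max_k=4)
print(spectrum)   # {3: 0, 5: 9, 7: 0, 9: 49}
```

The 9 pixels of the small stain disappear at the 5-pixel sieve and the 49 pixels of the large stain at the 9-pixel sieve, so each stain lands in its own size bin. Had the two stains overlapped, they would have been binned as one larger object.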

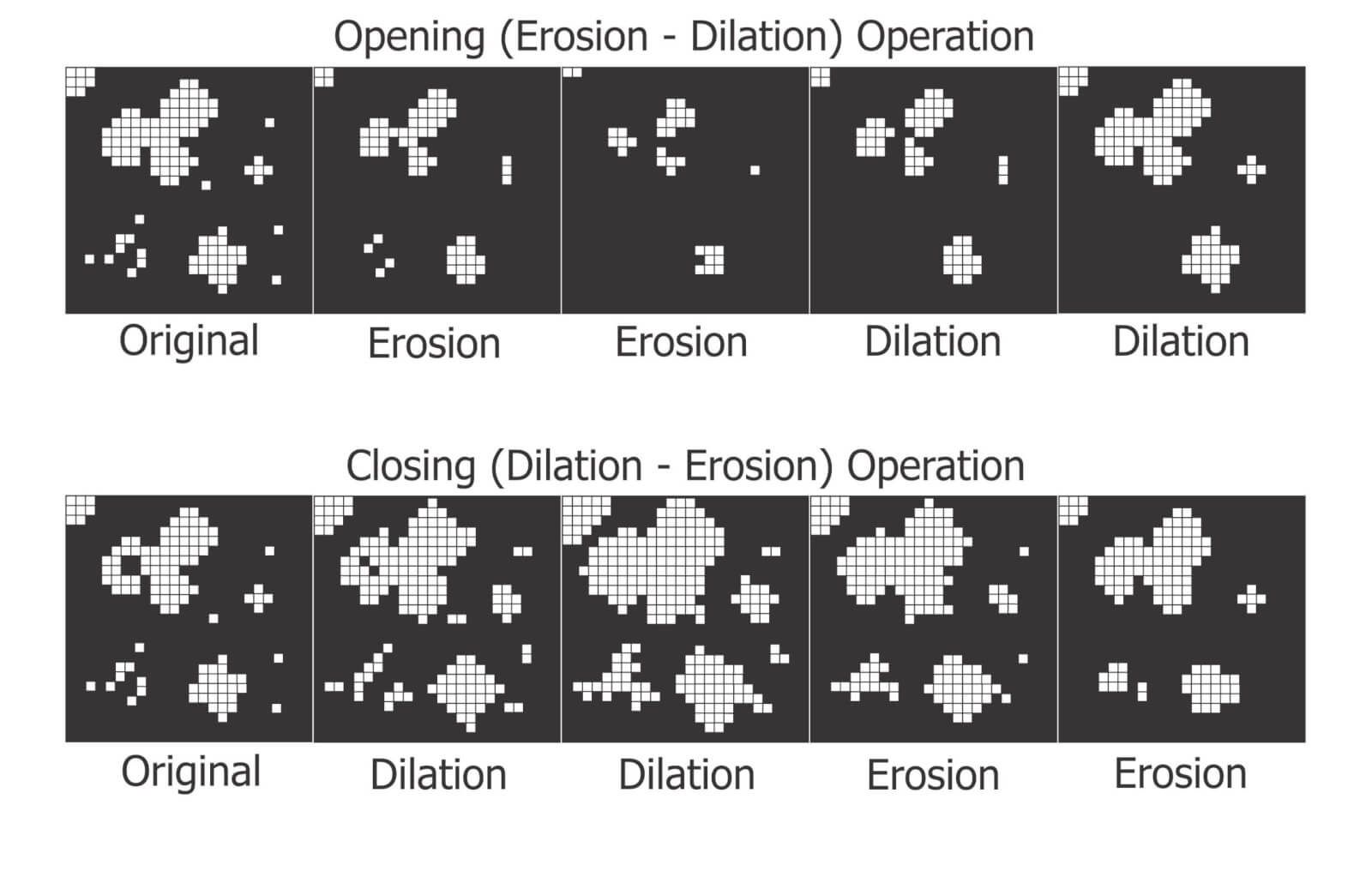

Dilation and Erosion

Think of dilation as adding pixels to the boundary of an object. This makes tiny objects bigger, fills in any interior holes and can cause objects to merge. The number of pixel-wide dilations required to make objects contact one another can be used as a measure of deposit density.

Erosion removes pixels from the outer (and sometimes inner) boundaries of an object. This eliminates tiny artifacts that may not actually represent stains. It can also split non-circular objects into multiple parts before shrinking them into multiple nuclei (aka centroids). These last-remaining points are not necessarily the centre of a stain, but the pixels furthest away from the original boundary.

When a non-circular shape has more than one nucleus, the nuclei likely represent individual droplets that combined to form the larger stain. We can then use these nuclei to measure deposit density, such as with a Voronoi partition, which divides the paper into cells so that every point in a cell is closer to that cell’s nucleus than to any other. Smaller cells indicate more densely packed deposits.
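To make the Voronoi idea concrete, here is a minimal sketch that assigns every pixel of a region to its nearest nucleus and reports the area of each resulting cell. The function name, the tiny 4 x 10 pixel region and the nucleus positions are hypothetical, and ties are simply given to whichever nucleus is listed first.

```python
def voronoi_cell_areas(nuclei, n_rows, n_cols):
    """Count the pixels that lie closest to each nucleus."""
    areas = {nucleus: 0 for nucleus in nuclei}
    for r in range(n_rows):
        for c in range(n_cols):
            # Squared Euclidean distance is enough to rank the nuclei.
            nearest = min(nuclei, key=lambda n: (n[0] - r) ** 2 + (n[1] - c) ** 2)
            areas[nearest] += 1
    return areas


nuclei = [(1, 1), (1, 4)]   # hypothetical nuclei on a 4 x 10 pixel region
areas = voronoi_cell_areas(nuclei, n_rows=4, n_cols=10)
print(areas)   # {(1, 1): 12, (1, 4): 28}
```

The second nucleus owns more than twice the area of the first, which suggests deposits are sparser on that side of the region.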

Many image processors use both these operations sequentially. When an image is eroded and then dilated (a process called “Opening”), smaller objects are removed, leaving the area and shape of remaining objects relatively intact. Dilating and then eroding (a process called “Closing”) fills in small holes and merges smaller objects, once again leaving the area and shape of remaining objects relatively intact. We can use both of these functions to help smooth an image prior to measurement.
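The behaviour of Opening and Closing can be seen on a toy image. The sketch below assumes a 3 x 3 neighbourhood and stores the binary image as a set of (row, column) foreground pixels. The stain, hole and speck are invented for illustration.

```python
NEIGHBOURHOOD = [(dr, dc) for dr in (-1, 0, 1) for dc in (-1, 0, 1)]  # 3 x 3


def dilate(pixels):
    """Add a one-pixel layer around every object."""
    return {(r + dr, c + dc) for r, c in pixels for dr, dc in NEIGHBOURHOOD}


def erode(pixels):
    """Remove a one-pixel layer from every outer and inner boundary."""
    return {(r, c) for r, c in pixels
            if all((r + dr, c + dc) in pixels for dr, dc in NEIGHBOURHOOD)}


def opening(pixels):
    return dilate(erode(pixels))   # erode, then dilate


def closing(pixels):
    return erode(dilate(pixels))   # dilate, then erode


solid = {(r, c) for r in range(7) for c in range(7)}
stain = solid - {(3, 3)}           # 7 x 7 stain with a one-pixel hole
speck = {(10, 10)}                 # isolated one-pixel artifact
image = stain | speck

opened = opening(image)            # speck removed, hole still present
closed = closing(image)            # hole filled, speck still present
smoothed = closing(opened)         # both clean-ups applied in sequence
print(len(image), len(opened), len(closed), len(smoothed))   # 49 48 50 49
```

Neither operation changes the outline of the 7 x 7 stain, which is why they are useful for smoothing before measurement.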

Distance Transformations

Distance transformations are advanced operations used to separate densely packed objects. While not typically used when analyzing WSP, they offer another means of identifying object nuclei, teasing apart objects that are likely the result of overlapping deposits, and mapping their relative sizes and positions.
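One way to picture a distance transformation is to record how many erosions each pixel survives. The sketch below does this with a 3 x 3 erosion (a chessboard distance, which is an assumption; many tools use Euclidean distance instead) and then treats strict local maxima as nuclei. The two overlapping square stains and all function names are hypothetical.

```python
NEIGHBOURS = [(dr, dc) for dr in (-1, 0, 1) for dc in (-1, 0, 1)
              if (dr, dc) != (0, 0)]


def erode(pixels):
    """Strip a one-pixel layer from every object (3 x 3 erosion)."""
    return {(r, c) for r, c in pixels
            if all((r + dr, c + dc) in pixels for dr, dc in NEIGHBOURS)}


def distance_transform(pixels):
    """Chessboard distance from each foreground pixel to the background."""
    distance, layer, current = {}, 0, set(pixels)
    while current:
        layer += 1
        for p in current:
            distance[p] = layer        # p has survived `layer - 1` erosions
        current = erode(current)
    return distance


def find_nuclei(distance):
    """Pixels strictly farther from the background than all 8 neighbours."""
    return {(r, c) for (r, c), d in distance.items()
            if all(d > distance.get((r + dr, c + dc), 0) for dr, dc in NEIGHBOURS)}


def block(top, left, side):
    """Hypothetical square stain, `side` pixels wide."""
    return {(top + r, left + c) for r in range(side) for c in range(side)}


merged = block(0, 0, 7) | block(4, 4, 7)   # two stains overlapping at a corner
distance = distance_transform(merged)
nuclei = find_nuclei(distance)
print(sorted(nuclei))   # [(3, 3), (7, 7)]
```

The merged object yields two nuclei, one at the centre of each original stain, while the narrow overlap region scores a low distance. Real stains often produce flat plateaus rather than single peak pixels, so practical tools need a more forgiving definition of a maximum than the strict one used here.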

Measurement

The calculation of the area covered by deposits is straightforward. The pixels belonging to objects (the deposits) and those belonging to background are summed and then the fraction is converted to percent area covered. Research has shown that the image resolution does not significantly impact percent coverage assessments and has suggested that all image analysis software tends to produce similar results (+/- 3.5% observed when the same threshold was applied to multiple papers). This is acceptable because it’s within the variability inherent to spraying.
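The calculation can be sketched in a few lines, assuming the binary image is stored as rows of 0 (background) and 1 (deposit). The function name and the tiny example image are illustrative only.

```python
def percent_area_covered(binary_image):
    """Percent of pixels that belong to deposits (1) rather than background (0)."""
    deposit = sum(sum(row) for row in binary_image)
    total = sum(len(row) for row in binary_image)
    return 100.0 * deposit / total


image = [
    [0, 1, 1, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 0, 0, 0, 1],
    [0, 0, 0, 0, 0],
]
coverage = percent_area_covered(image)   # 5 deposit pixels out of 20
print(coverage)   # 25.0
```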

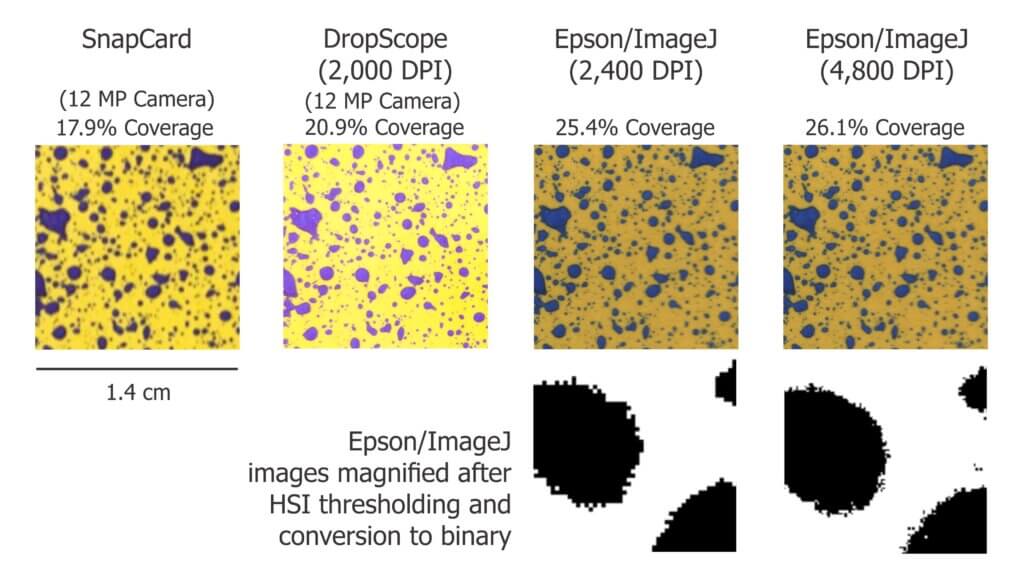

We ran a similar experiment wherein we analyzed the same piece of WSP using four methods. Here are a few facts about the software we used:

- DropScope produces images between 2,100 and 2,300 DPI. Currently, it ignores ellipses and doesn’t count anything spanning less than ~35 µm (3 pixels).

- We set ImageJ to ignore any object spanning less than 3 pixels, which at 2,400 DPI was 30 µm in diameter.

- We are unaware of Snapcard’s processing methods except that the software was benchmarked using ImageJ. Developers note it will underestimate the percent area covered if the image is out of focus. (Note: As of 2026, this app may no longer be supported by the GRDC).
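The relationship between scan resolution and the smallest countable object is simple arithmetic: one inch is 25,400 µm, so the pixel size is 25,400 divided by the DPI. The sketch below applies a 3-pixel minimum span to the resolutions mentioned above. The function names are ours, not those of any of the software discussed.

```python
MICRONS_PER_INCH = 25_400


def pixel_size_um(dpi):
    """Width of one pixel in micrometres at a given scan resolution."""
    return MICRONS_PER_INCH / dpi


def smallest_detectable_um(dpi, min_pixels=3):
    """Smallest span that still registers as an object, in micrometres."""
    return min_pixels * pixel_size_um(dpi)


for dpi in (2100, 2300, 2400):
    print(dpi, round(smallest_detectable_um(dpi), 1))
```

The results come out at roughly 36 µm and 33 µm for 2,100 and 2,300 DPI and about 32 µm for 2,400 DPI, in line with the rounded figures quoted above.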

The images shown in the figure below were cropped from screenshots produced by each method. The actual ROI analyzed was ~3 cm² for SnapCard, 3.68 cm² for DropScope and 2.0 cm² for both Epson/ImageJ methods. Our results indicate a +/- 4% difference in percent area coverage. This variability reflects the results of a 2016 journal article that compared SnapCard with ImageJ and other leading analytical software. That study claimed no statistically significant difference in percent coverage detected (standard deviations were about 20%). However, the ImageJ results tended to trend several percent higher than SnapCard. We saw this as well. And so, while resolution may not have a significant impact on percent area covered, there does appear to be some correlation.

Resolution definitely affects deposit counts, particularly in applications that employ finer droplets. Consider the difference between detecting or missing 1,000 objects that are each 30 µm in diameter. It may only amount to a fraction of a percent of the surface covered, but +/- 1,000 objects on a 2 cm² area is significant in terms of deposit density.
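The scale of that example can be checked with a short calculation. It assumes perfectly circular, non-overlapping stains, which is a simplification, and the function name is hypothetical.

```python
import math


def coverage_and_density(count, diameter_um, roi_cm2):
    """Percent area covered and deposits per cm2 for identical circular stains."""
    stain_area_um2 = math.pi * (diameter_um / 2) ** 2
    roi_um2 = roi_cm2 * 1e8              # 1 cm = 10,000 um, so 1 cm2 = 1e8 um2
    percent = 100 * count * stain_area_um2 / roi_um2
    density = count / roi_cm2
    return percent, density


percent, density = coverage_and_density(count=1000, diameter_um=30, roi_cm2=2.0)
print(round(percent, 2), density)   # 0.35 500.0
```

Missing those objects shifts percent area covered by only about a third of a percent, but it removes 500 deposits per cm² from the density estimate.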

Output

Once a WSP image (or set of images) has been scanned, pre-processed, processed and measured, we will receive some manner of output. Some software packages create an attractive report with images, graphs and key values. These reports include percent coverage and many provide droplet density. Deposits may be binned by size, or spread factors are used to calculate the original droplet diameters and even estimate the volume applied by area. Other software packages provide raw data that can be imported into a statistical program or spreadsheet program like Excel for further analysis. Some software packages provide both.

How far can we take this?

Blow-by-blow data analysis is beyond the scope of this document, but how much weight should we give to coverage data obtained using WSP? The answer depends on the metric in question, but in all cases we must first acknowledge the three overriding caveats. Take it as said that they apply to everything that follows:

- Different brands (and even different production runs) of WSP can produce significantly different coverage metrics. When conducting experiments, use a single brand of WSP. Better still, use papers from the same production batch whenever possible.

- The same piece of sprayed WSP can produce significantly different results depending on the software and protocol used to analyze it. When conducting experiments, use the same software and assessment protocol and be transparent about the process when communicating results.

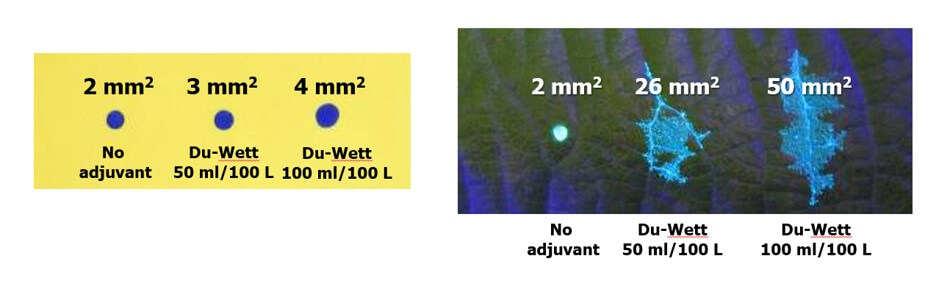

- WSP coverage may not reflect the coverage achieved on an actual plant tissue surface. It is suitable as a relative index (I.e. papers can be compared to papers, but not to tissues) but the spread factor changes with surface wettability and the surface tension of the liquid sprayed. Note the differences in percent area covered in the following experiment with an organosilicone super-spreader:

Recall that we started this document by listing the four pieces of information commonly sought using WSP. They were listed in order of reliability, and now we can explain why.

- The percent surface area covered: We have established that this is the most reliable piece of data. Droplets do not spread on WSP the way they do on plant surfaces, so it will underestimate actual coverage. The results vary by analytical method, but it’s likely not dependent on resolution and still falls within the variability inherent to spraying. This metric gives us valuable and actionable information. We can say whether or not we hit a target, and evaluate whether a sprayer change resulted in more or less deposit.

- The density of deposits on the target area: We have established that there are limits to the reliability of this metric. It is affected by the analytical method used and can be greatly underestimated when resolution is poor or when deposits overlap in high numbers. Also, it will never reliably reflect deposits under 30 µm. Nevertheless, under controlled conditions this information does have value and is of great interest in enquiries about drift and contact fungicides.

- The size of the droplets that left the stains: This metric is highly questionable except under controlled conditions. The many assumptions about surface tension, droplet speed, and droplet evaporation make it impossible to make definitive statements about spray quality. Finer droplets are greatly underestimated in this equation. Therefore, while there may be some value in using WSP as a relative index, this metric is a crude indication at best.

- The dose applied to the target surface: This metric has not been discussed up to this point, but is quickly and easily dismissed. Let’s assume that a droplet with a high concentration of an active ingredient will leave a stain that is the same area as another droplet with a lower concentration. This will lead some to suggest that as long as the original concentration is known, we can back-calculate the dose (which is the amount of active on a given area). However, one droplet has the same volume as eight droplets that are half its diameter. This cubic relationship means that if they all deposit, the larger droplet will cover roughly half the surface area of the eight smaller droplets. Therefore, the smaller droplets spread the same amount of active over a greater area. Spread factor muddies this a bit, but ultimately it means that dose cannot be estimated from area covered. Dose is better assessed using collectors that permit the residue to be removed, such as Petri dishes, Mylar sheets, pipe cleaners, alpha cellulose cards, or glass slides.
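The cubic relationship in the last point can be verified with a few lines of arithmetic. The sketch treats droplets as spheres and uses each droplet’s circular cross-section as a stand-in for its stain, ignoring spread factor. The 200 µm diameter is an arbitrary, hypothetical value.

```python
import math


def volume(diameter):
    """Volume of a spherical droplet."""
    return math.pi * diameter ** 3 / 6


def footprint(diameter):
    """Cross-sectional area of the droplet, used here in place of stain area."""
    return math.pi * diameter ** 2 / 4


large = 200.0                 # hypothetical droplet diameter in um
small = large / 2

n_small = volume(large) / volume(small)                         # droplets of equal total volume
area_ratio = footprint(large) / (n_small * footprint(small))    # large vs. all small
print(round(n_small, 6), round(area_ratio, 6))   # 8.0 0.5
```

The same volume, and therefore the same amount of active ingredient, covers twice the area when delivered as eight finer droplets, which is why area alone cannot indicate dose.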

And so, the image analysis process described here is powerful and effective when used with water sensitive paper as long as the limitations are acknowledged. The same process can also be used with dyes and specialized collectors such as Kromekote to permit even greater resolution. But that’s another story.

References (Further reading)

Bankhead, P. 2014. Analyzing fluorescence microscopy images with ImageJ.

Cunha, J.P.A.R., Farnese, A.C., Olivet, J.J. 2013. Computer programs for analysis of droplets sprayed on water sensitive papers. Planta Daninha, Viçosa-MG. 31(3): 715-720.

Ferguson, J.C., Chechetto, R.G., O’Donnell, C.C., Fritz, B.K., Hoffmann, W.C., Coleman, C.E., Chauhan, B.S., Adkins, S.W., Kruger, G.R., Hewitt, A.J. 2016. Assessing a novel smartphone application – SnapCard, compared to five imaging systems to quantify droplet deposition on artificial collectors. Computers and Electronics in Agriculture. 128: 193-198.

Ledebuhr, M. 2016. Small Drop Sprays.

Marçal, A.R.S., Cunha, M. 2008. Image processing of artificial targets for automatic evaluation of spray quality. Trans. of the ASABE. 51(3): 811-821.

Moor, A., Langenakens, J., Vereecke, E., Jaeken, P., Lootens, P., Vandecasteele, P. 2000. Image analysis of water sensitive paper as a tool for the evaluation of spray distribution of orchard sprayers. Aspects of Applied Biology. 57.

Panneton, B. 2002. Image analysis of water‐sensitive cards for spray coverage experiments. Applied Eng. in Agric. 18(2): 179‐182.

Salyani, M., Zhu, H., Sweeb, R.D., Pai, N. 2013. Assessment of spray distribution with water-sensitive paper. Agric. Eng. Int.: CIGR Journal. 15(2): 101-111.

SnapCard website. University of Western Australia and the Department of Primary Industries and Regional Development, Western Australia. (Note: As of 2026, may no longer exist).

Syngenta. 2002. Water‐sensitive paper for monitoring spray distributions. CH‐4002. Basle, Switzerland: Syngenta Crop Protection.

Turner, C.R., Huntington, K.A. 1970. The use of a water sensitive dye for the detection and assessment of small spray droplets. J. Agric. Eng. Res. 15: 385-387.